- Blog

- Cisco spark for mac download

- Chromatic scale piano

- Dock it baby

- Adobe premiere certification

- Smartcraft sc1000 system monitor

- Ask electrical engineers say about battery pulse desulfator

- Eero router login ip

- Aural learner definition

- Cross dj free bluetooth headphone

- Iphoto library manager remove duplicates

We know very little about the effects of natural animal signals on inducing emotions in other animals, a point we will try to address below. Rather, they are using specific signal types to induce a form of emotional contagion in their listeners. In the case of humans who are attempting to manage the behavior of infants and animals, the speakers need not be directly experiencing the emotion they are trying to induce. Prosody can be used to induce behavioral changes in others. The communication of affect through voice is not unique to humans, and the acoustic structures involved must have similar effects on the nervous system of both human infant and animal recipients. The convergence of signal structure that humans use to communicate with both preverbal infants and nonhuman animals suggests that these signals are effective across species. Interestingly, similar features appear in the calls and whistles used by humans to control the behavior of working animals (dogs and horses) ( McConnell, 1990, 1991). These patterns were observed across speakers of several different languages. Long descending intonation contours have a calming effect, and behavior can be stopped with a single short plosive note. Several short, upwardly rising staccato calls lead to increased arousal. A clear test of this is in studies of communication between human parents and preverbal infants, where specific prosodic (musical) features have been identified that can influence the behavioral state of the infant ( Fernald, 1992). It seems quite likely that we detect emotional signals more clearly through pitch and intonation contours than we do through actual words. Thus, the harmonic structure of speech closely parallels that of music across cultures, and affective changes in emotion are evident in different harmonic structures of speech just as they are in music.Ī second source of music in language is prosody-the intonation contours of speech. 
This was particularly noteworthy with respect to major and minor thirds. (2010) sampled speech spectra from excited versus subdued speech and found that the spectral distribution of excited speech showed similarities to the distribution of major intervals, whereas the spectral distribution of subdued speech matched the spectral pattern of minor intervals. The authors suggest that humans prefer tone combinations that reflect the spectral relationships of human vocalizations. Gill and Purves (2009) showed that the most widely used scales across time and across cultures are those that are similar to harmonic series. (2011) examined music and speech from three tonal languages and three nontonal languages and found that changes in pitch direction occurred more frequently and had larger changes in pitch direction in tonal languages, and that the music typical of the cultures with tonal language also showed similar frequent and large changes in pitch direction, suggesting a coevolution of music and language. The authors sampled not only English speakers but speakers of Tamil, Farsi, and Mandarin and found similar relationships within each language.

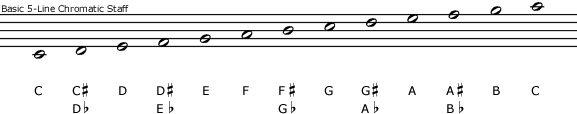

Peaks in the distribution were especially prominent at the octave, the fifth, the fourth, the major third, and the major sixth forming the intervals of the pentatonic scale and most of the intervals on a diatonic scale ( Schwartz et al., 2003). This appears to be a direct result of the resonances of the human vocal tract, and suggests that music and speech are closely linked.

#Chromatic scale piano series

In a series of studies, Purves and collaborators have shown that the statistical structure of human speech shows a probability distribution with peaks at frequency ratios that match the chromatic scale.

Human vowel sounds are based on the chromatic scale. Eckart Altenmüller, in Progress in Brain Research, 2015 5 Music and Emotion in Human Speech and Parallels in Other Species
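The link drawn by Schwartz et al. (2003) between speech-spectrum peaks and the chromatic scale can be illustrated with a little arithmetic. The peaks fall at the octave, fifth, fourth, major third, and major sixth. The minimal sketch below converts each of these intervals to cents and finds the nearest of the 12 equal-tempered chromatic steps. The just-intonation ratios (2:1, 3:2, 4:3, 5:4, 5:3) are standard textbook values assumed here for illustration. The helper names `ratio_to_cents` and `nearest_semitone` are hypothetical. This is not the authors' analysis method.

```python
import math

# Assumed just-intonation frequency ratios for the intervals named in the text
# (standard textbook values, used here only for illustration).
INTERVALS = {
    "octave": (2, 1),
    "fifth": (3, 2),
    "fourth": (4, 3),
    "major third": (5, 4),
    "major sixth": (5, 3),
}


def ratio_to_cents(num: int, den: int) -> float:
    """Size of the interval num:den in cents (1200 cents per octave)."""
    return 1200.0 * math.log2(num / den)


def nearest_semitone(cents: float) -> tuple[int, float]:
    """Nearest equal-tempered chromatic step (100 cents each) and the deviation from it."""
    step = round(cents / 100.0)
    return step, cents - 100.0 * step


if __name__ == "__main__":
    for name, (num, den) in INTERVALS.items():
        cents = ratio_to_cents(num, den)
        step, dev = nearest_semitone(cents)
        print(f"{name:12s} {num}:{den}  {cents:7.1f} cents -> {step:2d} semitones ({dev:+.1f})")
```

Each ratio lands on a distinct chromatic step. The fifth and fourth fall within about 2 cents of 7 and 5 semitones. The just major third and major sixth sit roughly 14 and 16 cents flat of their equal-tempered counterparts.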
